Every company is writing an AI policy right now. Most of them were written with ChatGPT. And nearly all of them share the same blind spot.

Over the last 5 months, we worked with 121 companies preparing for SOC 2 audits and renewals—across tools like Vanta, Drata, and Secureframe. As part of that process, we reviewed 53 real AI policies submitted to auditors. They were thoughtful. Structured. Well-written. And almost all of them failed in the same way.

The Irony

Most teams don’t start from scratch. They go to ChatGPT or Claude and ask for help. Not hypothetically—this is exactly what they type.

The Actual Prompts They Use

1. The Generic Policy Prompt

“Write an internal AI usage policy for a SaaS company handling customer data”

“Create a policy for employees using ChatGPT securely”

What they want:

- Clean

- Formal

- Sounds like control

Reality:

- No enforcement mechanism

2. The Compliance Prompt

“What controls do we need for AI usage to pass SOC 2?”

“How do companies govern LLM usage with sensitive data?”

What’s happening: They’re reverse-engineering compliance language. Not solving the actual problem.

3. The Risk Checklist Prompt

“What are the risks of employees using ChatGPT internally?”

“How do we prevent data leakage from AI tools?”

What they get:

- Data leakage risks

- IP exposure

- Hallucinations

- Model training concerns

What’s missing:

- Visibility into the AI apps and workflows built inside the company

The Core Problem

We reviewed 53 enterprise AI policies. They all covered the obvious:

- Acceptable use guidelines

- Data handling rules

- Approved vs restricted tools

But none of them answered one simple question:

“What AI apps actually exist inside our company?”

This is not a minor gap. This is the gap that makes everything else… decorative.

The 5 Failure Points

1. “Approved Tools Only”

Assumes: You know what tools are being used

Reality: You don’t. Shadow AI is invisible by default

2. “No Sensitive Data in AI Tools”

Assumes: Employees will self-police

Reality: No enforcement. No detection. No signal

3. “Register Internal AI Apps”

Assumes: Only developers are building AI tools

Reality: Ops, marketing, and finance are building them too, in tools like Zapier, Notion, Make, and Airtable

4. “Audit & Monitoring”

Assumes: Logs exist somewhere

Reality: AI workflows sit outside your observability stack

5. “Employee Responsibility”

Translation:

“We hope people behave.”

Hope is not a control framework.
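One way to make the five failure points concrete is to write each clause down next to the assumption it makes and the signal, if any, that would let you verify it. The sketch below is only an illustration: `PolicyClause`, `unverifiable`, and the `signal` field are hypothetical names, and every signal is set to `None` to mirror the pattern described above rather than any specific company's setup.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class PolicyClause:
    name: str
    assumes: str            # what the clause takes for granted
    signal: Optional[str]   # hypothetical data source that could verify the clause


def unverifiable(clauses: list[PolicyClause]) -> list[str]:
    """Return the names of clauses that have no detection signal behind them."""
    return [c.name for c in clauses if c.signal is None]


# The five clauses from the list above. Every signal is None to mirror the
# pattern in the reviewed policies; fill in whatever your company really has.
clauses = [
    PolicyClause("Approved Tools Only", "you know what tools are being used", None),
    PolicyClause("No Sensitive Data in AI Tools", "employees will self-police", None),
    PolicyClause("Register Internal AI Apps", "only developers build AI tools", None),
    PolicyClause("Audit & Monitoring", "logs exist somewhere", None),
    PolicyClause("Employee Responsibility", "people behave", None),
]

for name in unverifiable(clauses):
    print(f"No signal behind: {name}")
```

If a clause ends up with a real signal next to it, it drops off the list. Anything still printed is a clause that rests on hope.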

The Uncomfortable Truth

AI policies are written as if visibility already exists. In most companies, it doesn’t.

If you can’t answer:

“What AI apps exist in our organization right now?”

Then your policy isn’t control. It’s documentation. And documentation doesn’t stop a breach.

What the Best Teams Do Differently

The companies getting this right aren’t writing better policies.

They’re doing something simpler—and harder:

They build visibility first.

Then they let the policy reflect reality.

Not the other way around.
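As a rough sketch of what "visibility first" can mean in practice, you can compare the AI apps your policy knows about with the ones you actually observe in use. Everything here is an assumption for illustration: the function name `compare_inventory`, the app names, and the idea that you already have some way to collect the `observed` set.

```python
def compare_inventory(registered: set[str], observed: set[str]) -> dict[str, set[str]]:
    """Split AI apps into covered, shadow, and stale groups."""
    return {
        "covered": registered & observed,  # in the policy and seen in use
        "shadow": observed - registered,   # seen in use, missing from the policy
        "stale": registered - observed,    # in the policy, not seen in use
    }


# Made-up example data, not taken from any real company.
registered = {"chatgpt-enterprise", "internal-support-bot"}
observed = {
    "chatgpt-enterprise",
    "zapier-lead-summarizer",
    "airtable-invoice-classifier",
}

report = compare_inventory(registered, observed)
for group, apps in report.items():
    print(group, sorted(apps))
```

The "shadow" group is the part the policy cannot govern yet. Writing the policy after this comparison, rather than before it, is the ordering described above.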

Final Thought

Right now, most AI policies answer the question companies wish they could answer. Not the one they actually can.

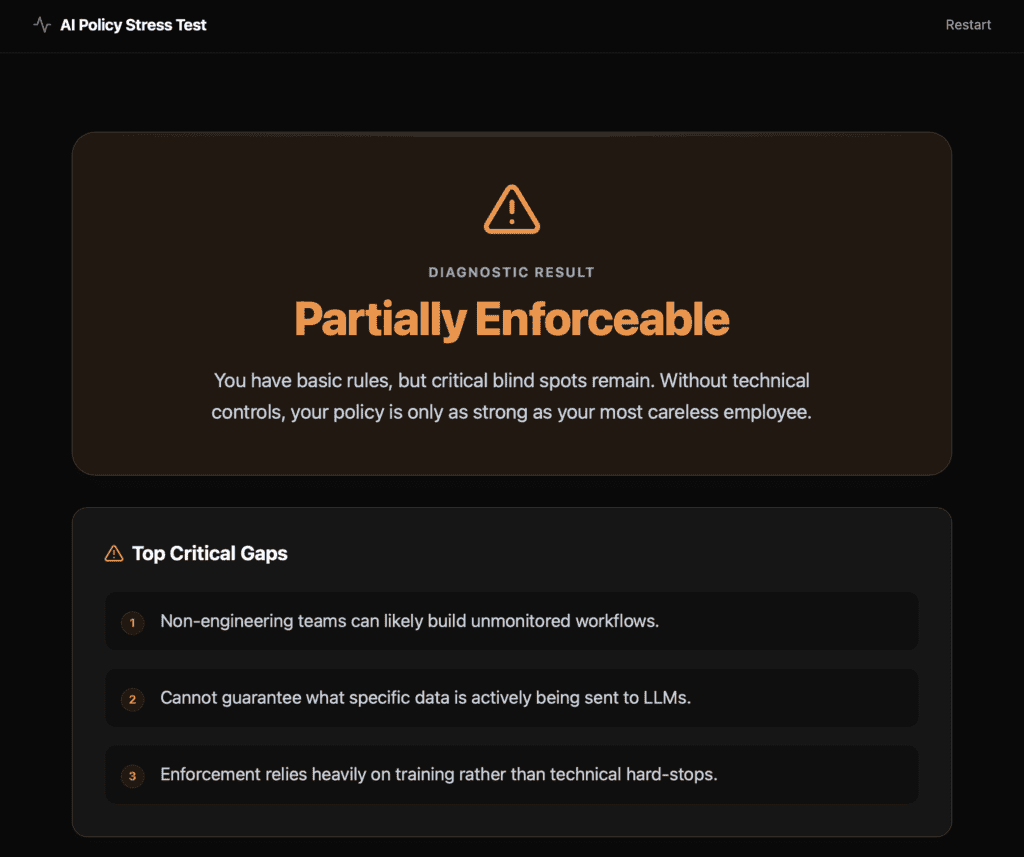

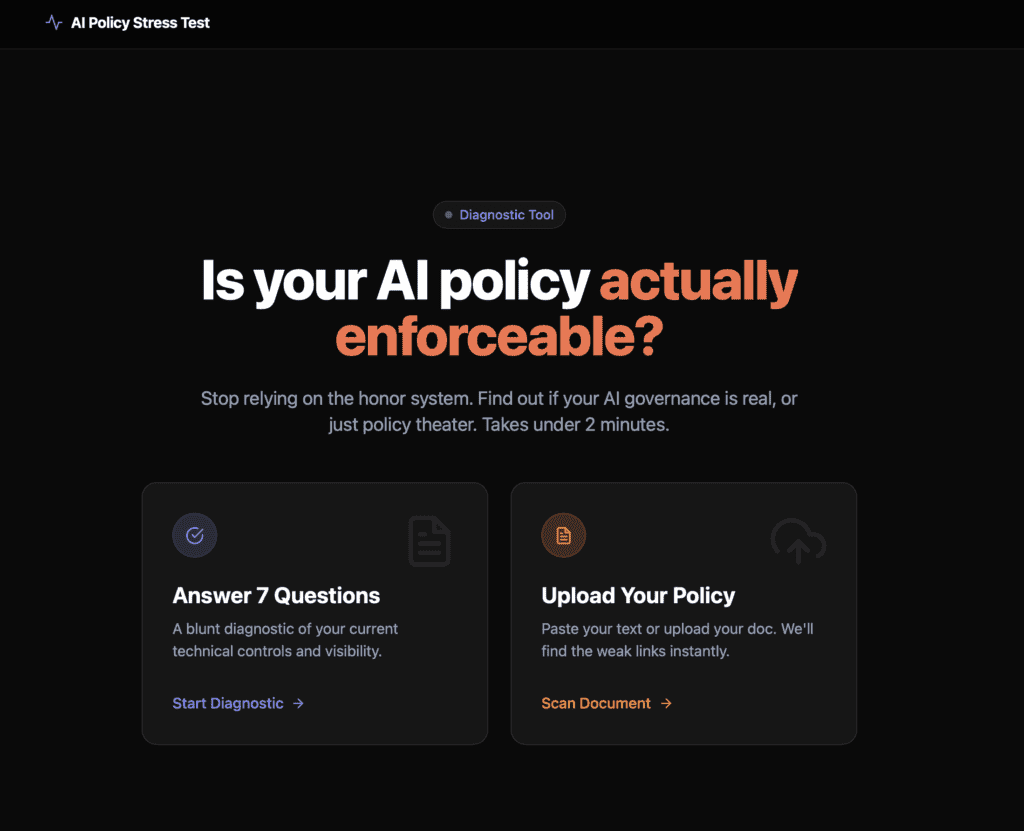

We built a Policy Stress Test to show exactly where yours breaks. If your policy assumes visibility you don’t have, you’ll see it in under 2 minutes.